- Chemistry & Biotechnology

- Constructional Engineering & Architecture

- Electrical Engineering & Electronics

- Energy & Environment

- Engineering

- Information and Communication Technologies

- Machinery & Plant Engineering

- Materials & Material Engineering

- Measurement Technology & Microsystems Technology

- Medical Technology

- Nutrition & Health

- Optics

- Pharma & Medicine

- Process- & Automation Technology

- Traffic & Mobility

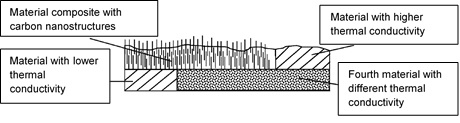

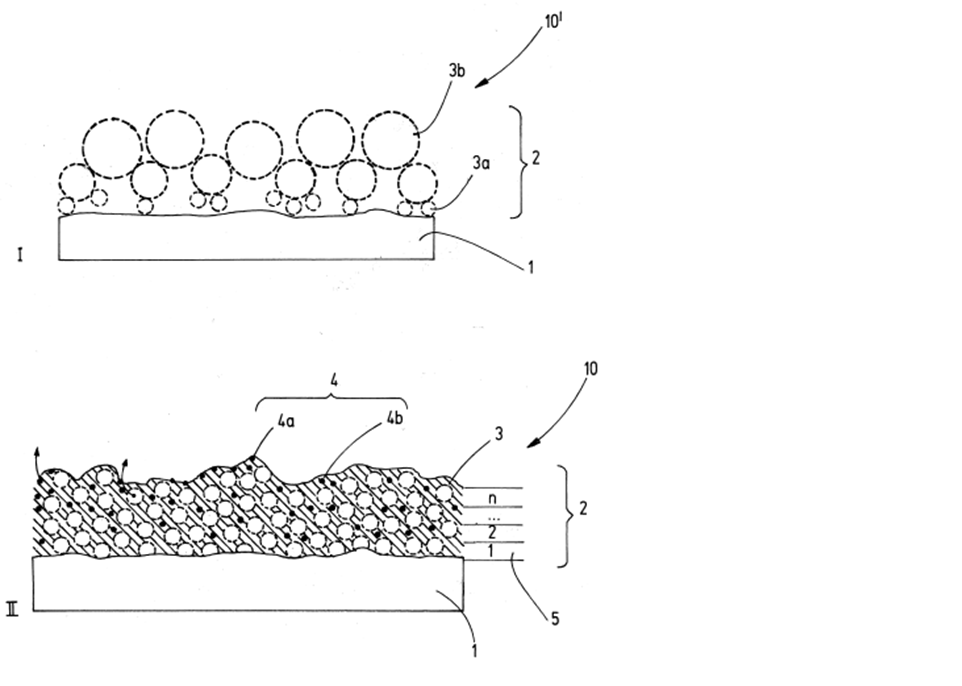

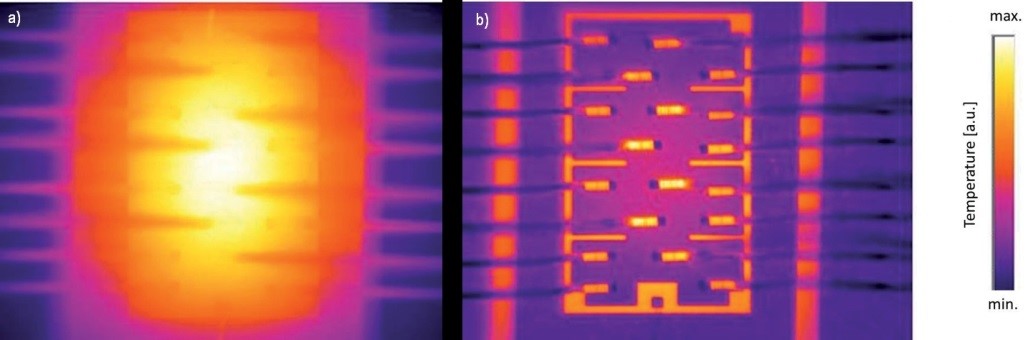

Wherever power loss generates heat in electronic components, that heat must be dissipated to prevent the components from overheating. The invention relates to a method for producing a metal-carbon composite material, the composite material itself, and its use as a heat conductor and as a heat exchanger. It increases the interface and/or contact area of a detachable, reusable thermal interface, thereby increasing the heat flow between two surfaces.

To produce clear, brilliant beers, wines or juices, the beverages must be filtered. Clarifying agents can be used to increase filtration performance and shorten beverage production time. Using defined amounts of certain pectins, if necessary in combination with gallotannins, significantly improves the two decisive process parameters of filtration: turbidity/clarity and filtrate throughput.

Some of the most important and abundant bacteria in the natural microbial community of kefir grain-based beverages are very slow-growing species and are therefore not used in industrially relevant starter cultures. This invention provides a method for using these species that makes industrial-scale production of a synthetic kefir feasible, one that resembles the organoleptic and health-promoting characteristics of home-made kefir more closely than current industrial kefirs.

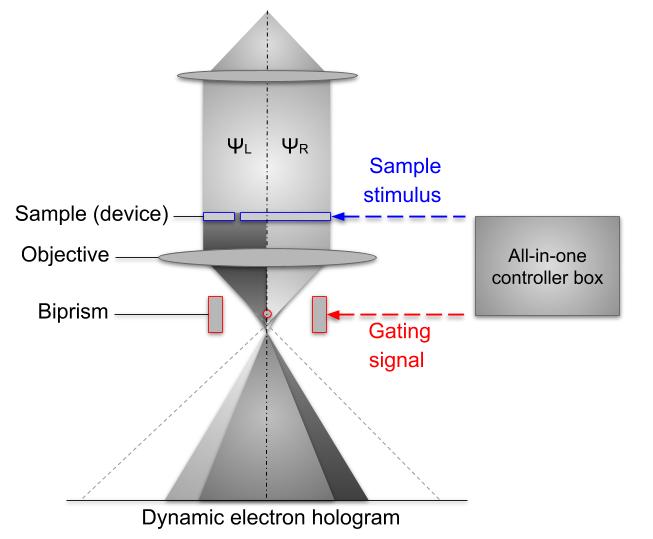

This invention enables simultaneous spatially and temporally resolved measurement of electronic and electromagnetic processes in a TEM (transmission electron microscope).

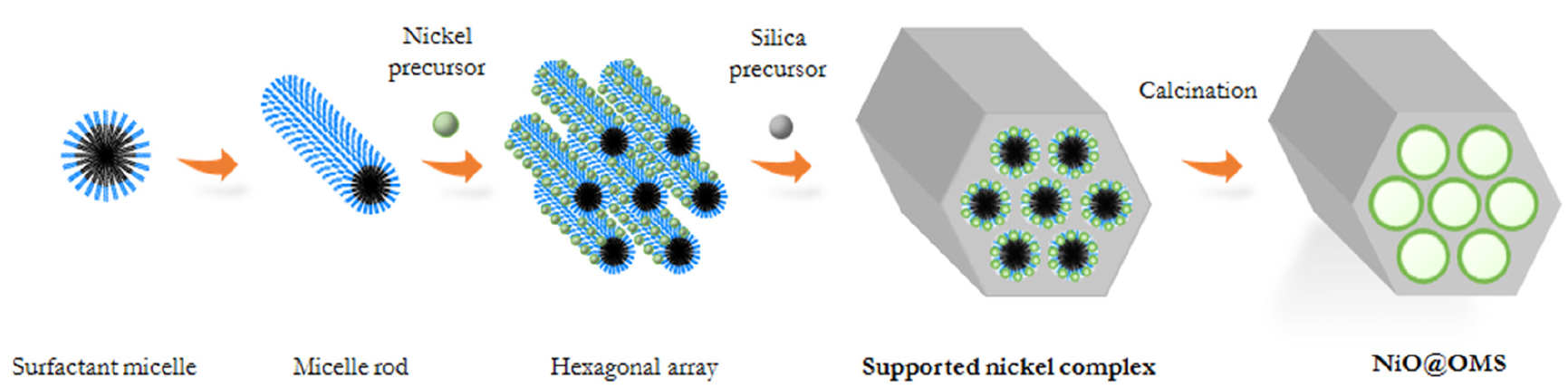

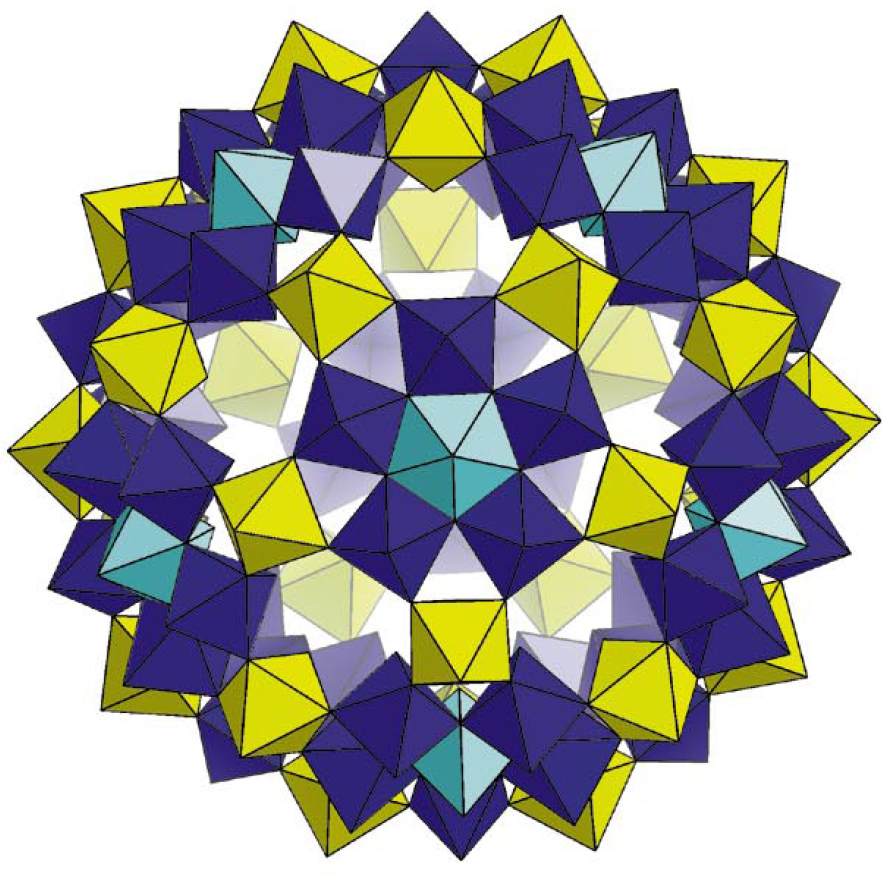

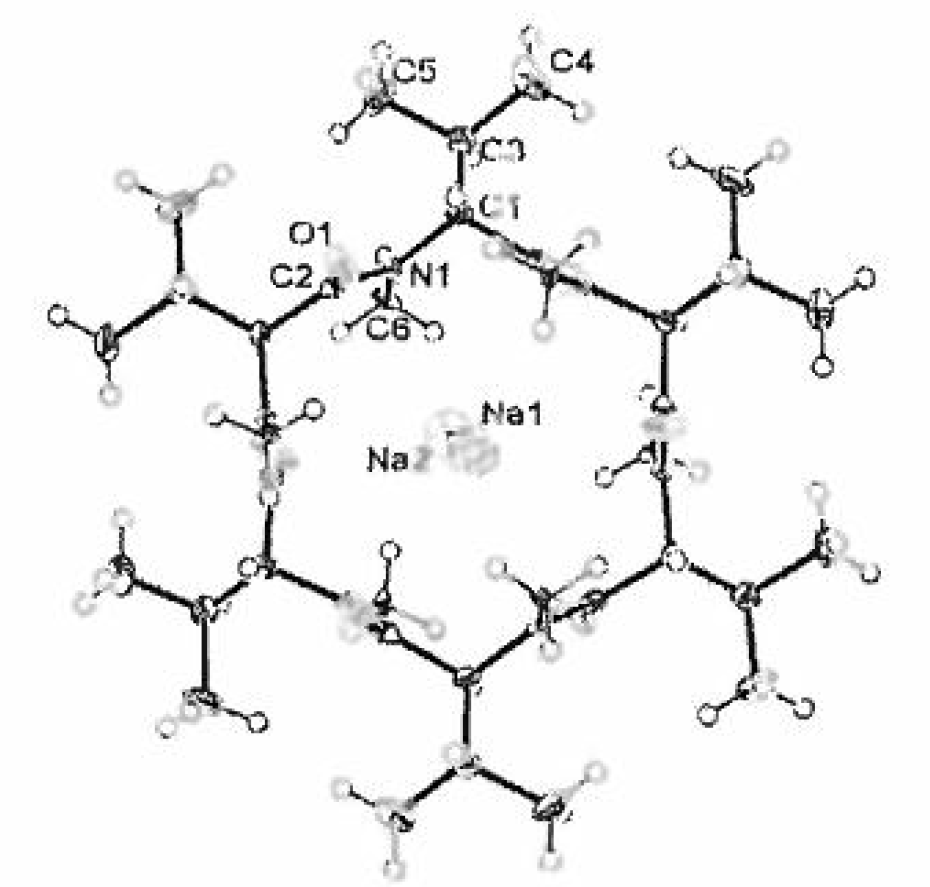

This invention enables simpler production of ordered mesoporous silica structures, even at room temperature.

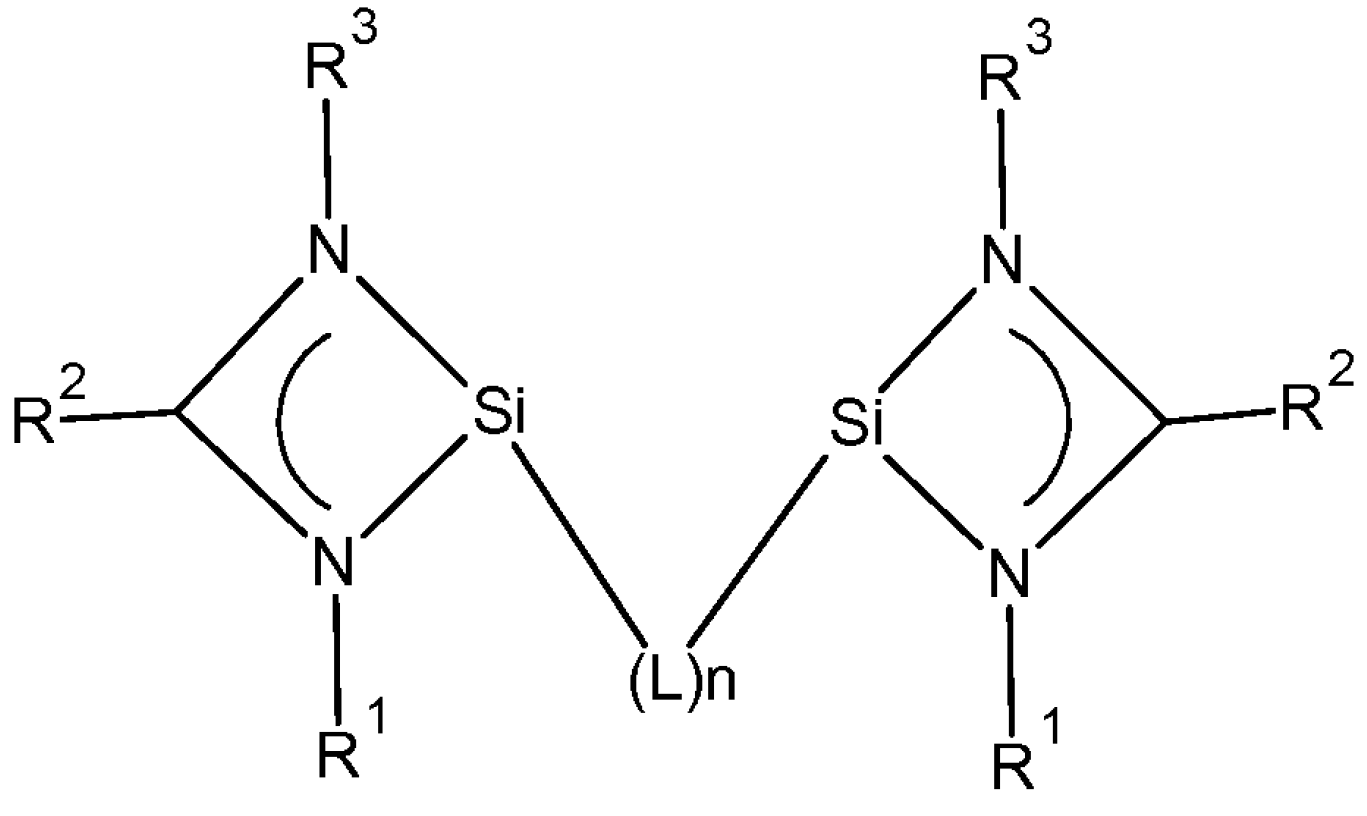

This invention enables simplified hydroformylation using a novel catalyst with silylene-based ligands.

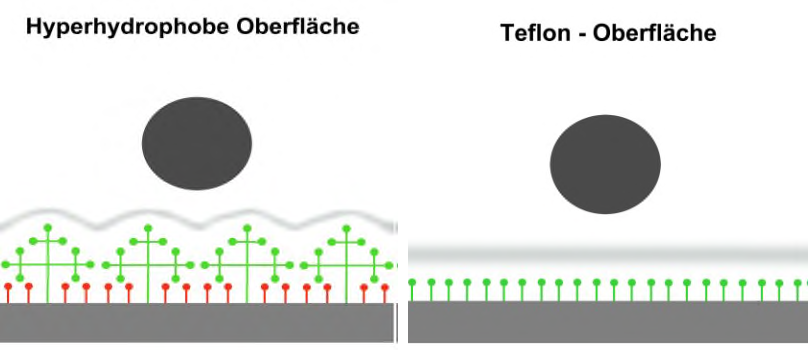

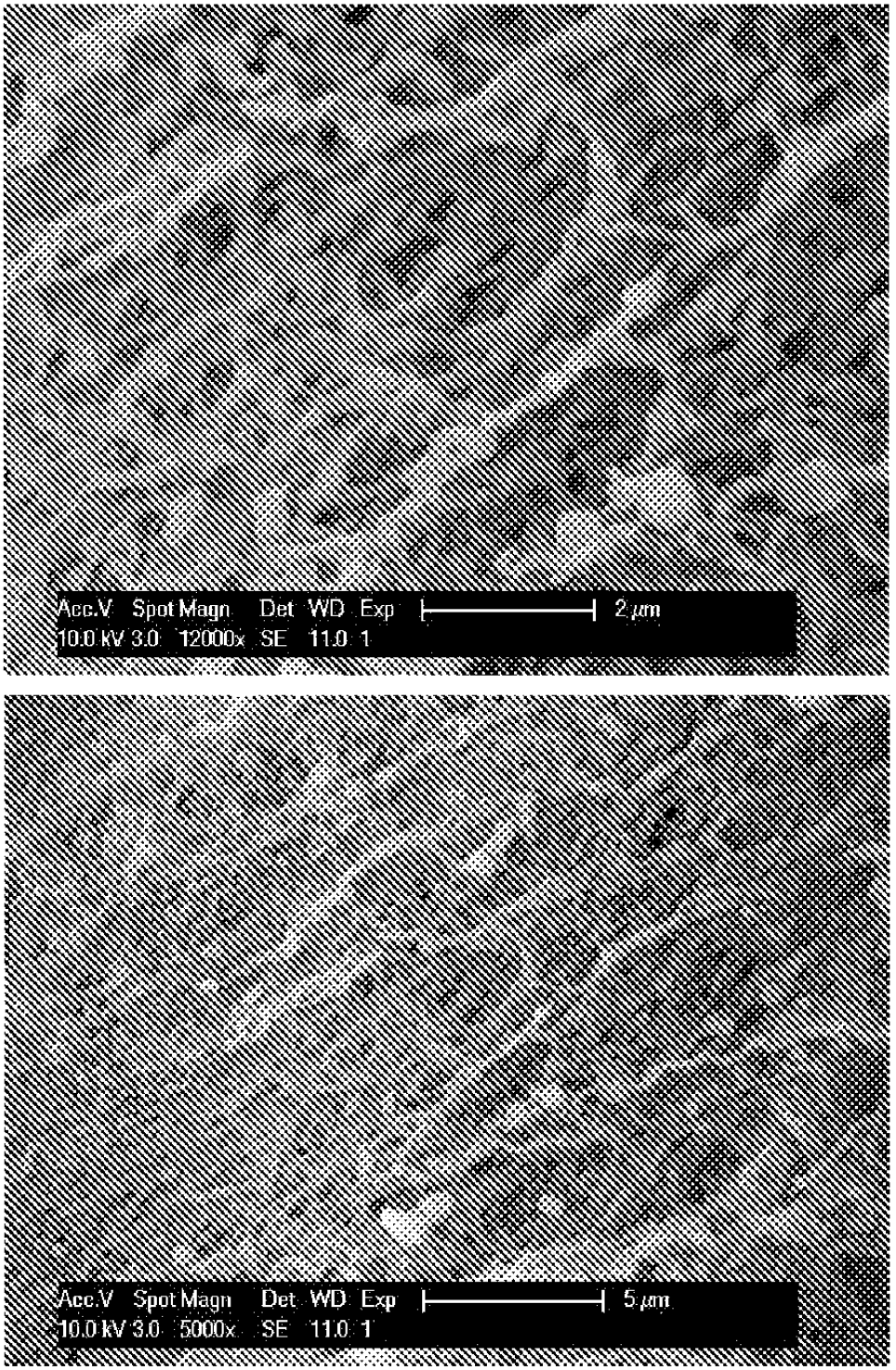

This invention enables the inertization of various material surfaces to improve their resistance to soiling and wear.

This invention enables simpler manufacturing of porous silicate particles as host material for most industrial catalysts.

This invention describes the composition of a UV LED that operates at shorter wavelengths.

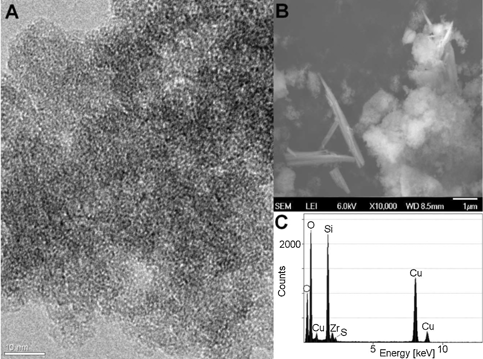

This invention enables water treatment to remove hard-to-remove substances such as drug residues and hormone-like substances by means of photocatalytic iron-molybdenum clusters.

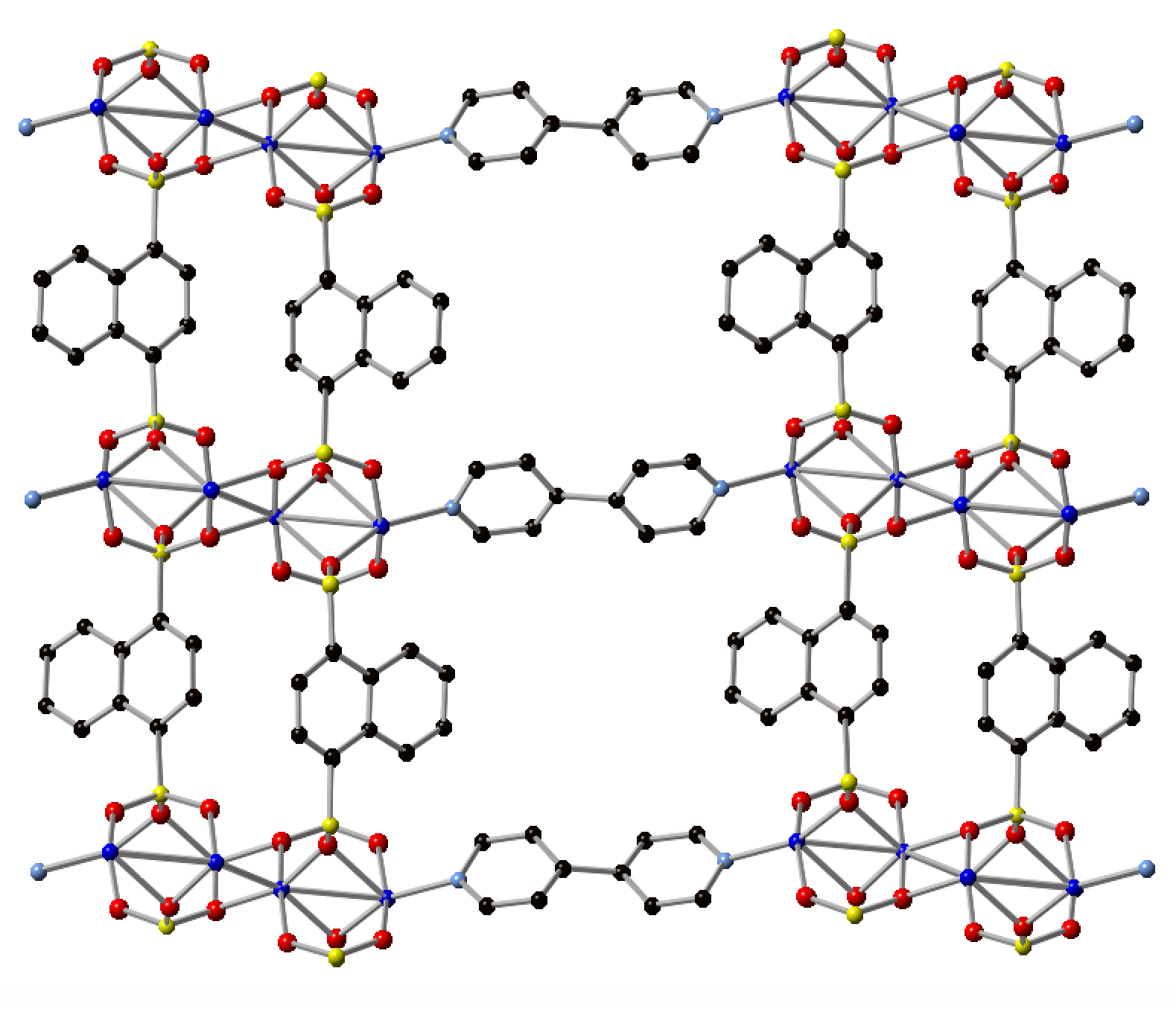

This invention describes the use of a novel semiconducting MOF (metal-organic framework) containing phosphonate and arsenate as an electrode material for supercapacitors.

This invention enables the production of an oncolytic virus variant, enabling a novel, specific treatment of malignant cancer (in vivo proof of concept, TRL 5).

This invention enables the manufacture of metallic-effect plastic articles without visible flow lines.

This invention makes it possible to dispense with diatomaceous earth while retaining the existing plant infrastructure for precoat filtration, which is mainly used in beverage production.

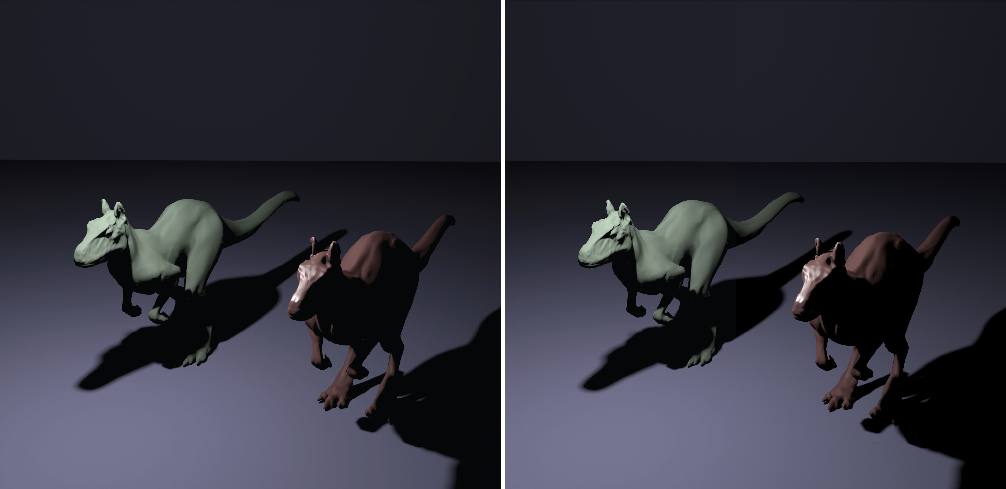

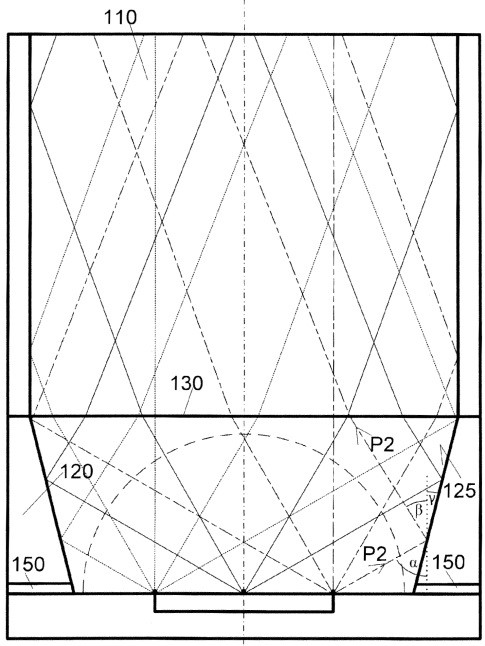

This invention enables improved simulation of lighting in three-dimensional environments while remaining computationally efficient.

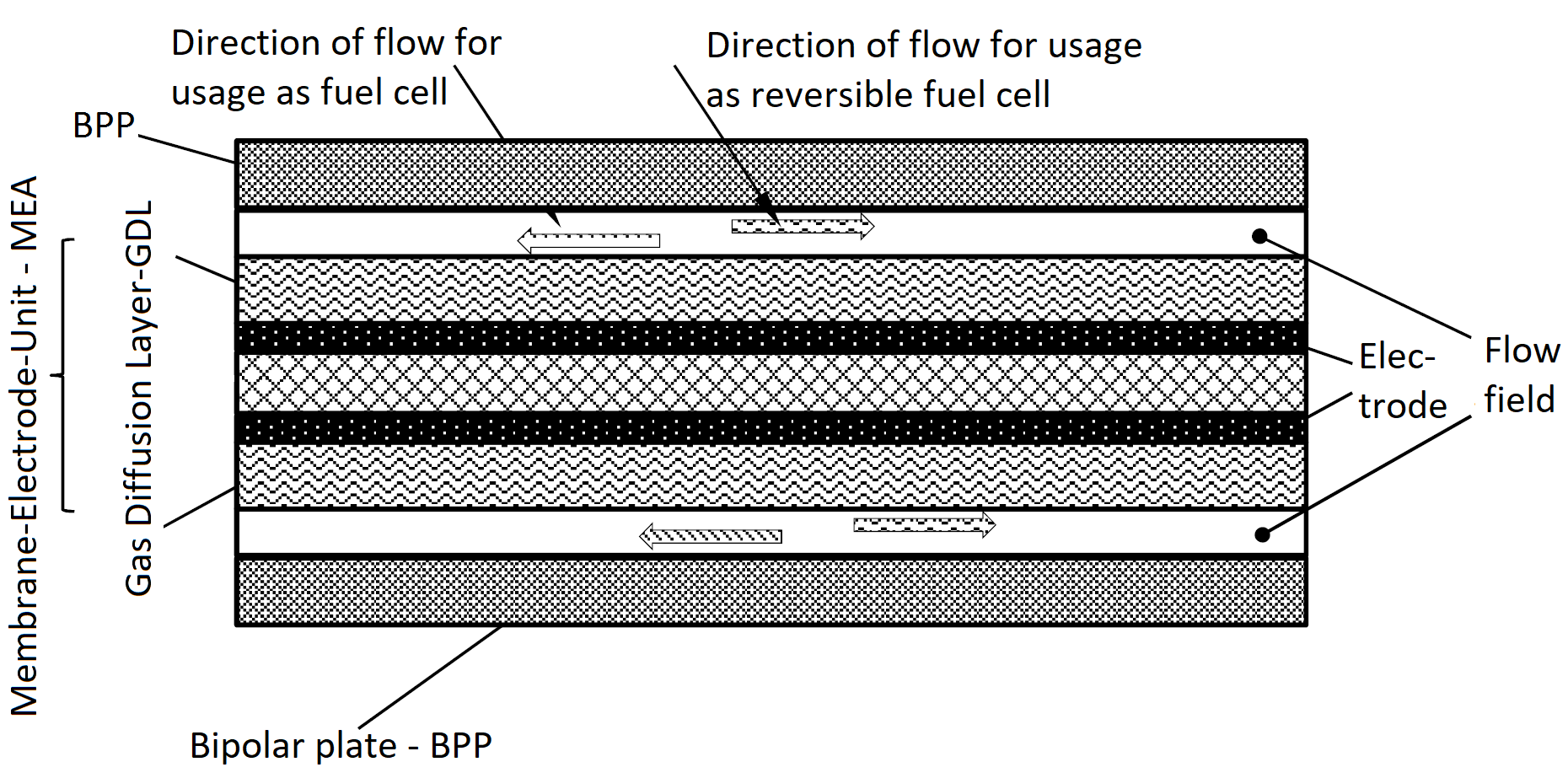

This invention enables improved catalysis of reactions commonly used in fuel cells and electrolyzers, as well as a simpler manufacturing process for the catalyst material.

This invention enables time resolution on the picosecond scale and therefore the investigation of dynamic processes in a TEM.

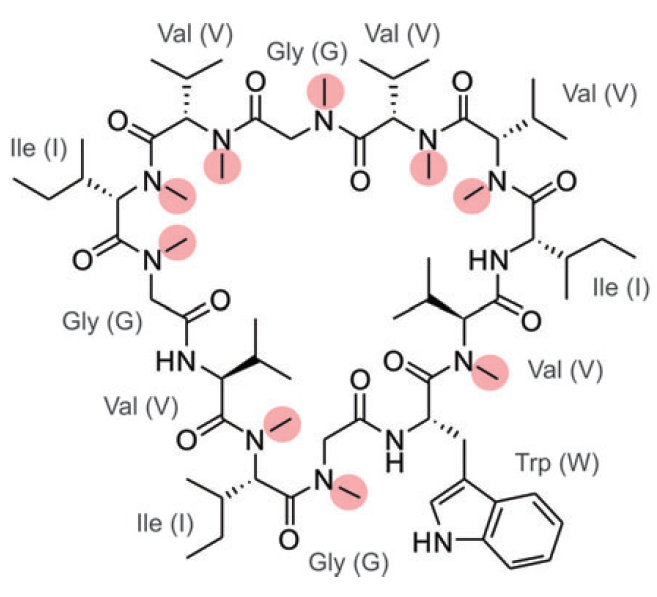

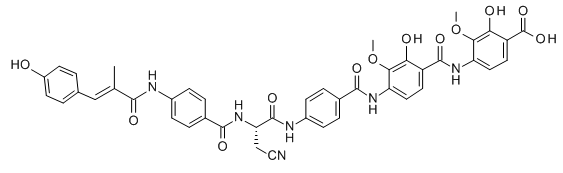

This invention enables the synthesis of omphalotin A and modified secondary metabolites for the development of novel antibiotics.

This invention comprises a process for synthesizing secondary metabolites in fungal host organisms for the production of novel antibiotics.

This invention enables simplified catalysis for the production of polylactic acid (PLA).

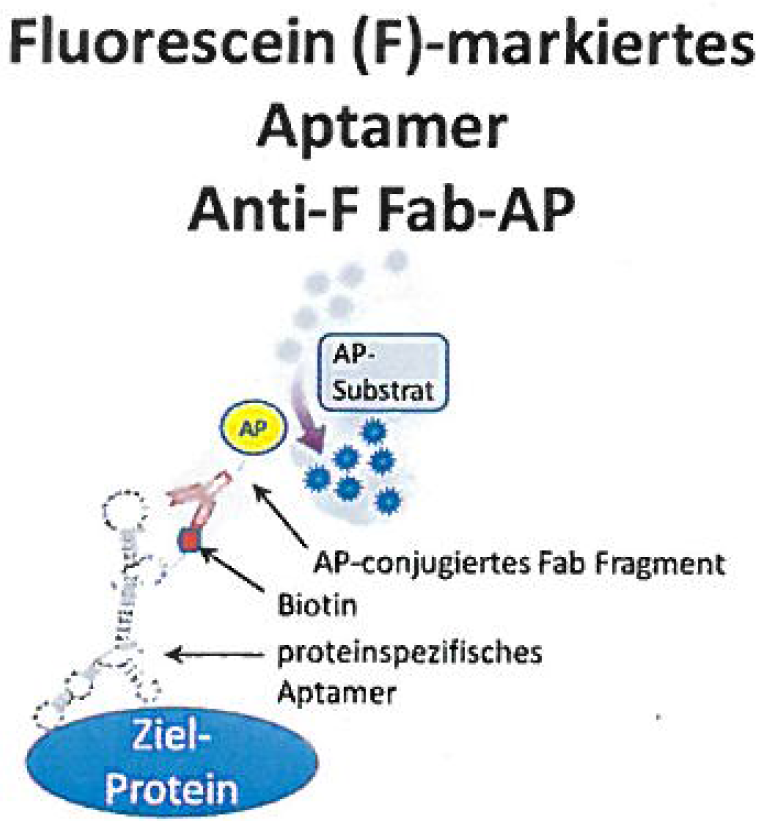

This innovation enables easy labeling and separation of different proteins in a single sample.

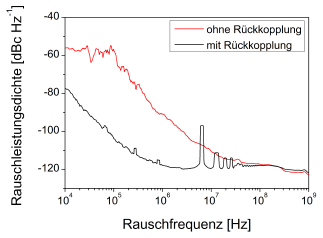

This invention enables simpler manufacturing of low-jitter clocks for high-frequency data transmission signals.

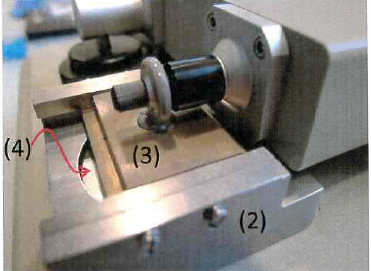

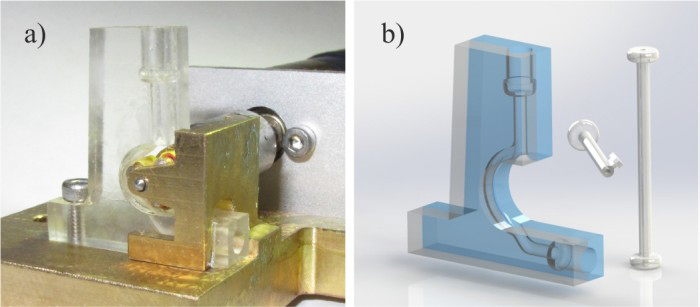

This invention enables the preparation of wedge-shaped TEM samples using common laboratory equipment.

An enhanced electrode for fuel cells and electrocatalysis, and the process for manufacturing it.

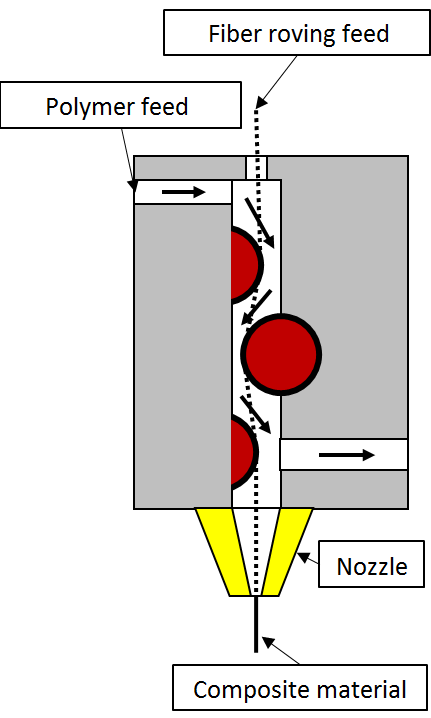

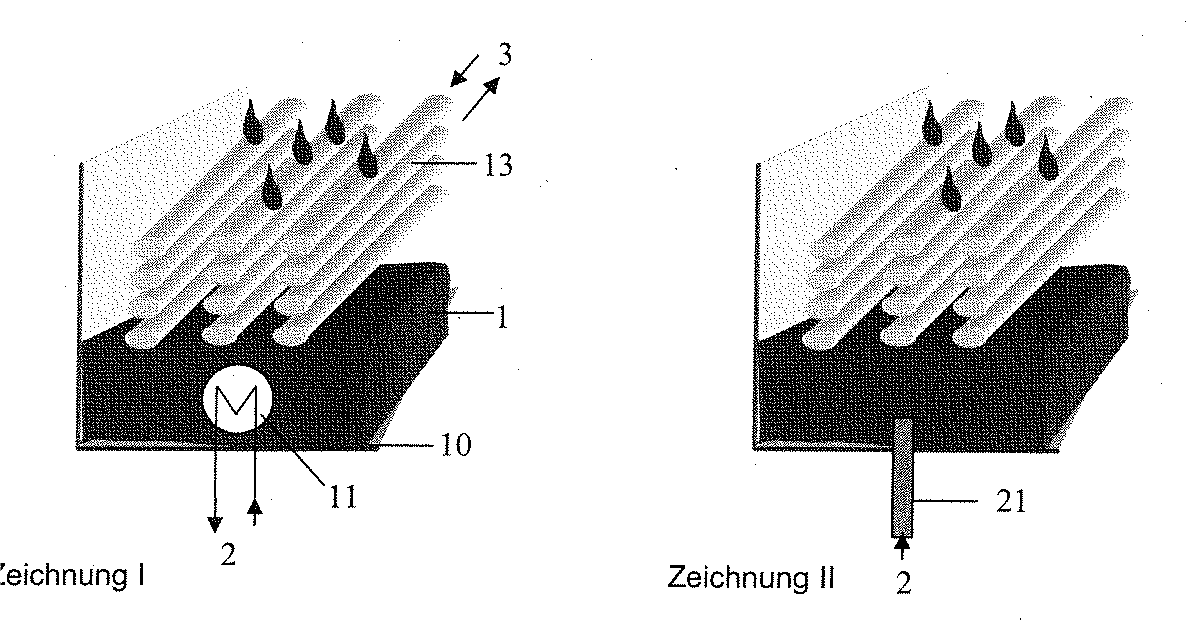

A device and method for constructing thermoplastics reinforced with continuous filaments.

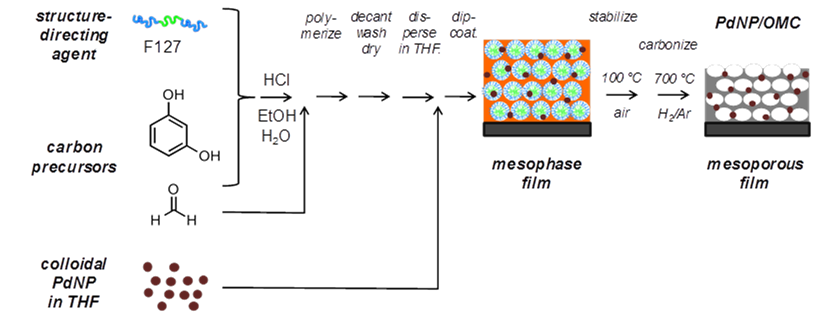

Production method for mesoporous carbon coatings incorporating noble metal nanoparticles.

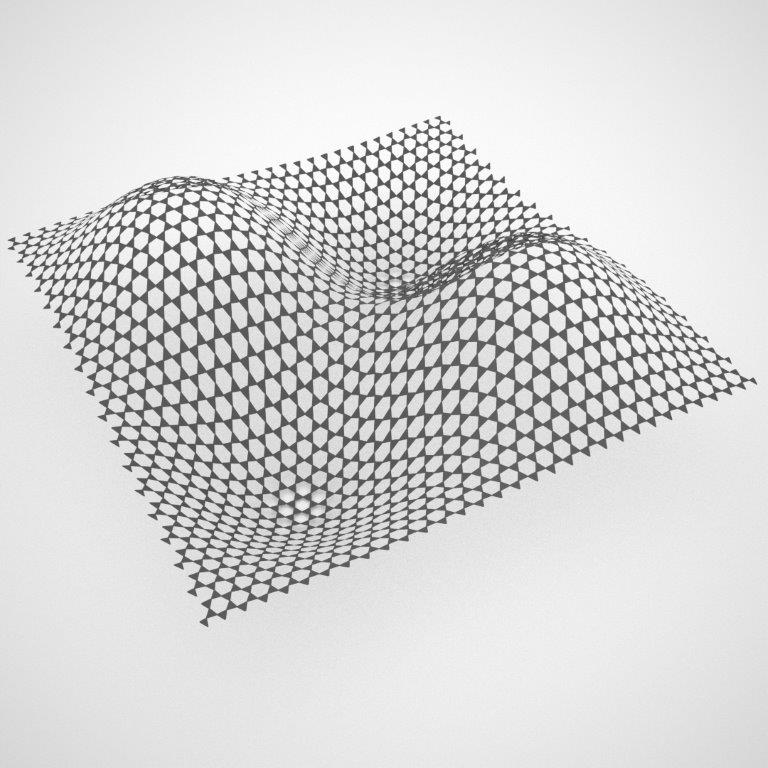

The invention uses auxetic materials to produce shaping structures, making it possible to realize components that would otherwise be difficult to produce.

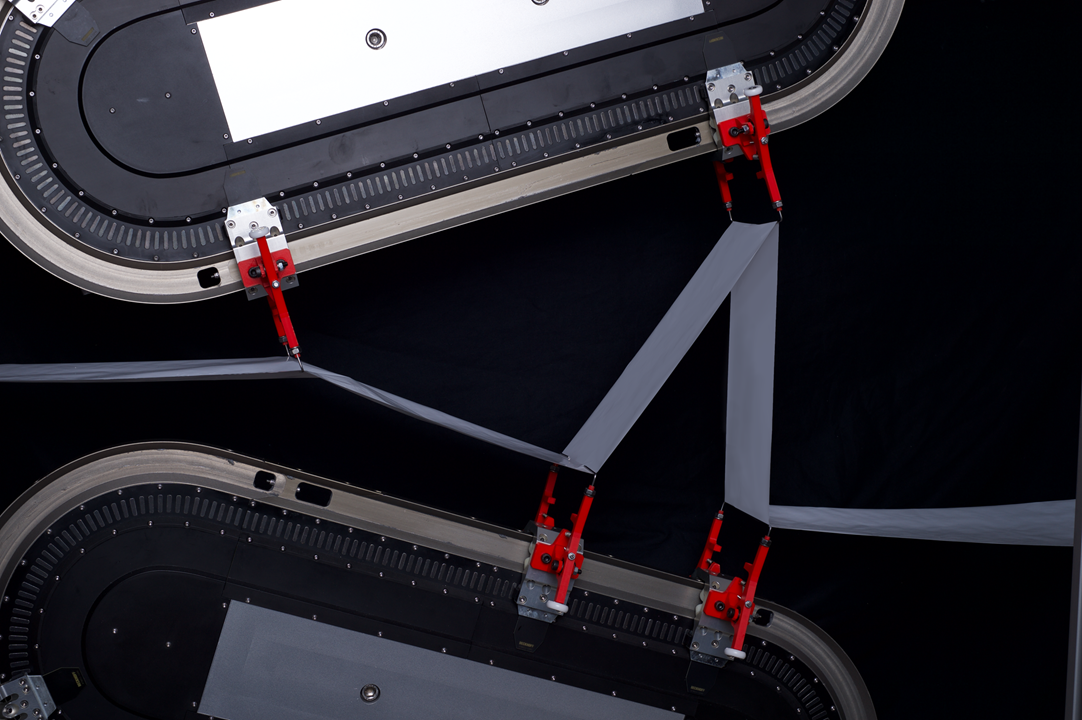

The invention enables continuous Z-folding of an electrode-separator composite for faster, more cost-efficient production of battery cells.

This invention provides a novel, less invasive method for determining the EAP values of beverages.

This invention enables highly precise, simultaneous acquisition of several bioelectric and bio-optical brain and body signals and is used in research and clinical settings as well as in automotive safety electronics and virtual reality applications.

The method uses electromagnetic radiation to determine oxygen saturation in the blood more accurately than conventional methods.

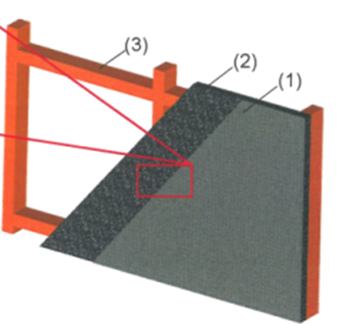

This invention enables the production of concrete with improved surface quality, avoiding structural defects such as pores in the concrete surface.

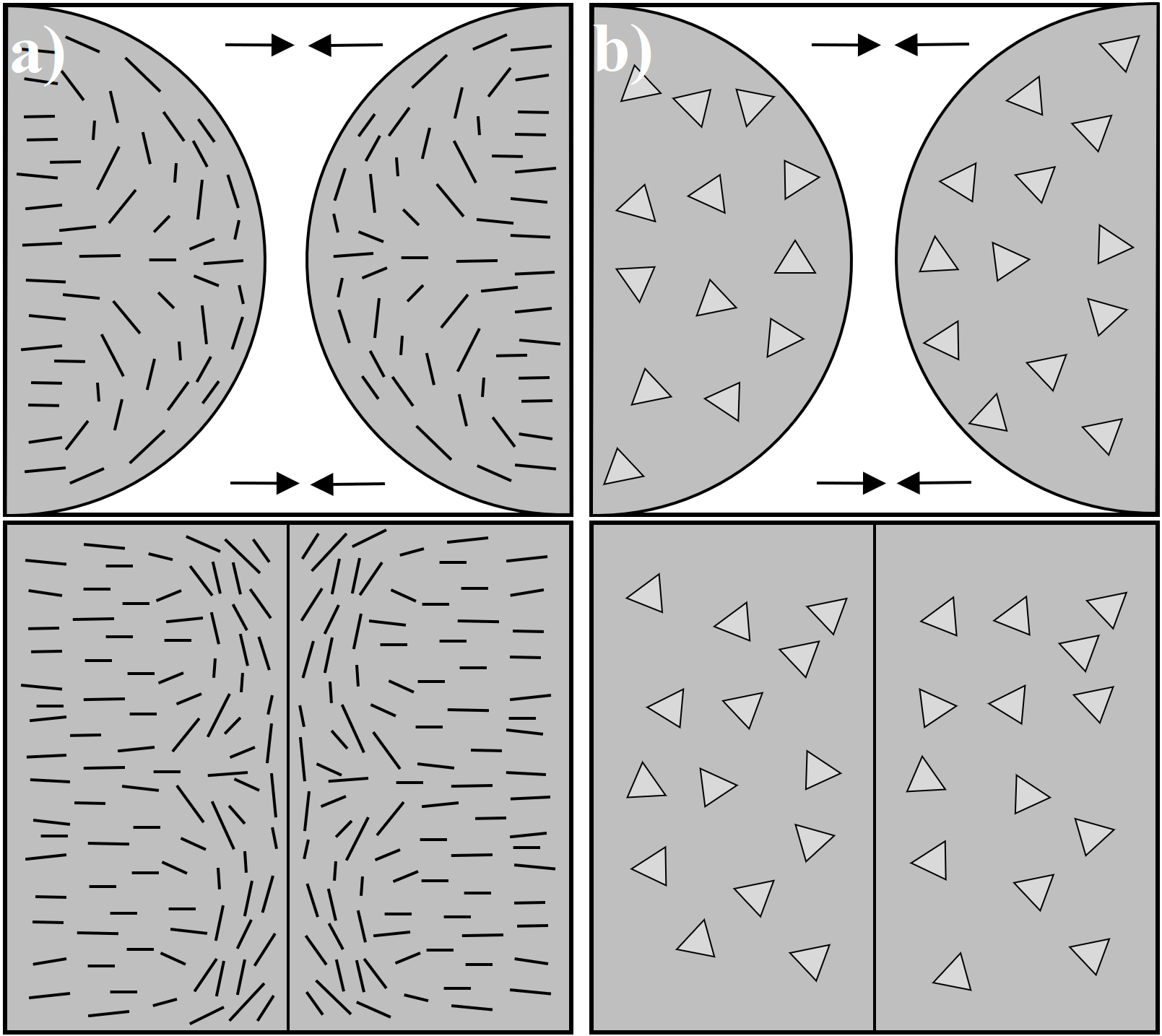

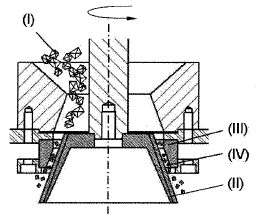

The method enables a universal, fast and easy evaluation and determination of abrasive grains and is used in the production of abrasive grains and abrasives.

This invention enables the production of improved magnesium alloys, which are particularly interesting as lightweight materials in the automotive, aerospace and medical technology industries.

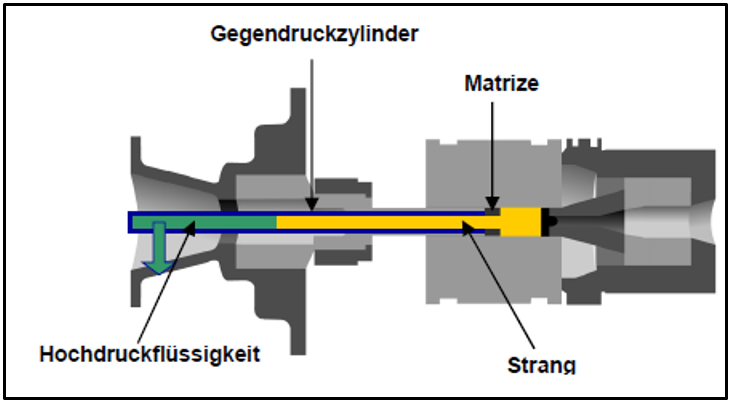

This invention enables the production of tailor-made extruded profiles for the forming of metals, for example in the production of pipes and the like. The process can be used in the automotive or aerospace industries.

With the invention of the SOS system, it is possible to skim oil slicks from the surface of the water, i.e. to absorb them, even in heavy seas.

Scientists from TU Berlin have developed an improved method for producing a beverage with an ethanol content below 0.5 vol%.

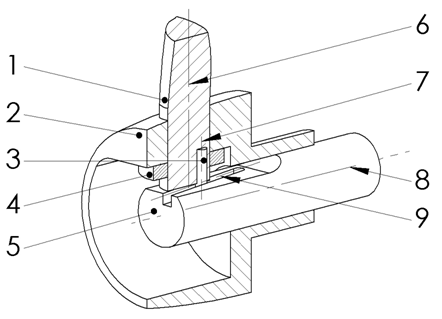

This invention enables fast, precise and highly dynamic dosing of the smallest quantities of liquid, as required, for example, in medical technology.

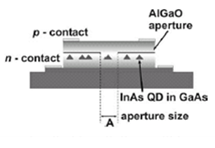

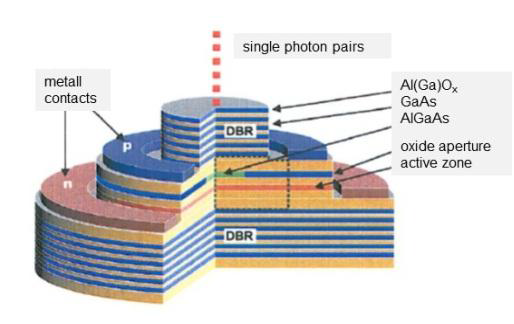

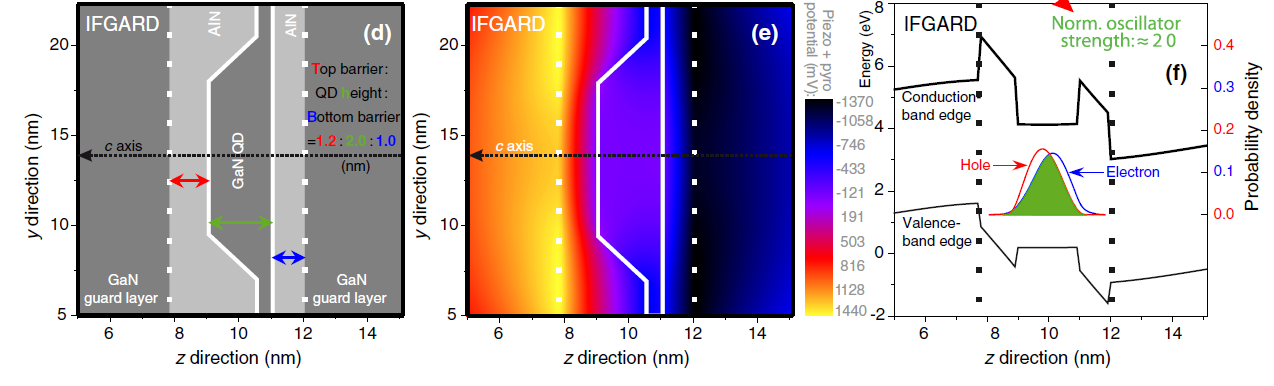

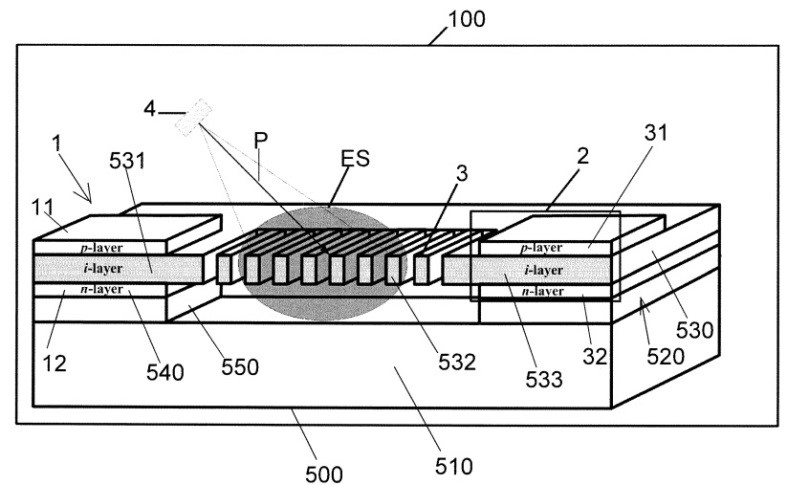

The invention enables a simple and reproducible process for producing a single-photon or entangled-photon source, as used in quantum cryptography, the security industry and communications and information technology.

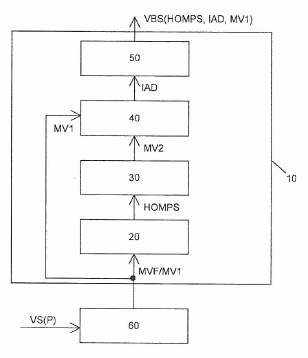

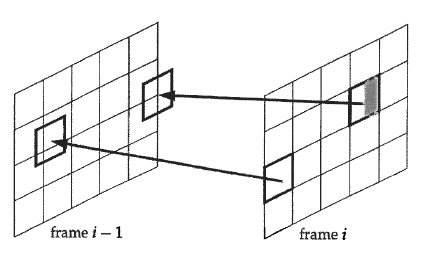

This method is used in the video software industry and in online video portals and enables, among other things, a reduction of data rates in video coding.

This invention can be used, for example, in the video software industry or in online video portals and enables the reduction of bit rates, improved image quality and improved noise suppression.

With this invention, measurements are possible wherever the available installation height is low, for example on sports equipment, road and rail vehicles or construction machinery.

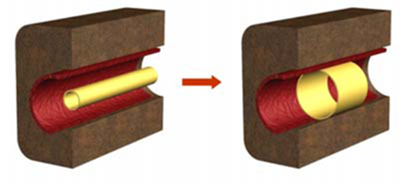

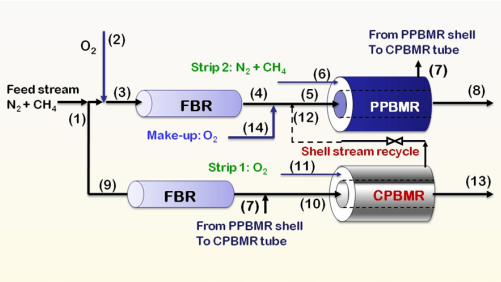

The fluidized bed membrane reactor can be used in the chemical industry and is suitable for the oxidative coupling of methane and heterogeneous catalysis.

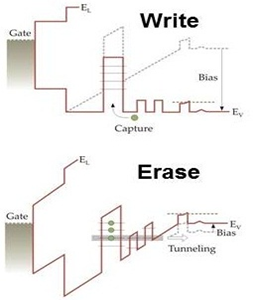

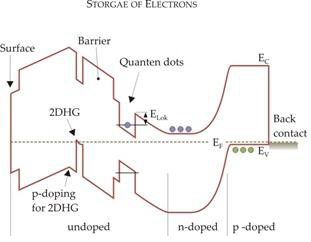

This innovative memory cell offers both high performance and long life and can be used in the computer industry, optoelectronics or consumer electronics.

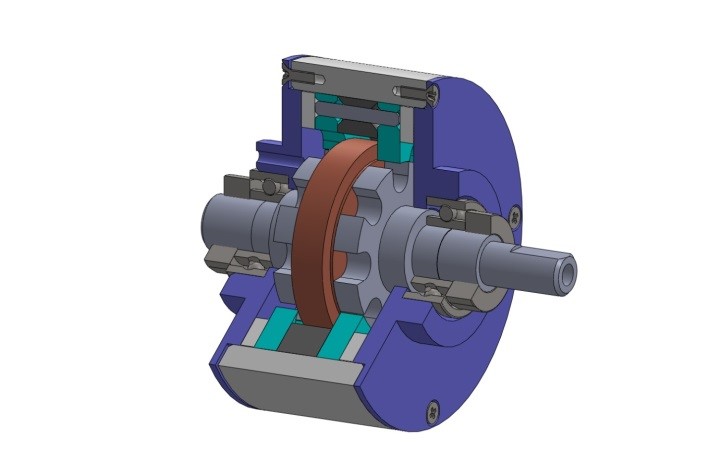

The innovative micropump enables precise dosing and exact control of the smallest quantities of liquid in the nanoliter range, as is often required in applications in medicine, biochemistry or chemistry.

This method produces layers with locally arranged nanostructures, from which light-emitting devices and single-photon emitters can be constructed, making quantum cryptography and communication possible.

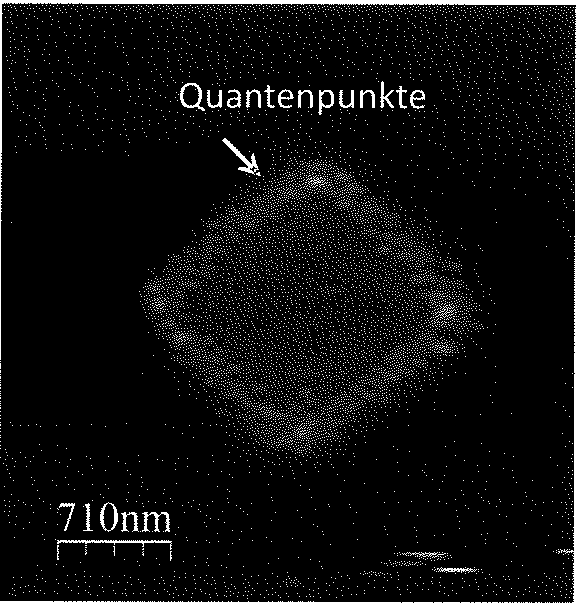

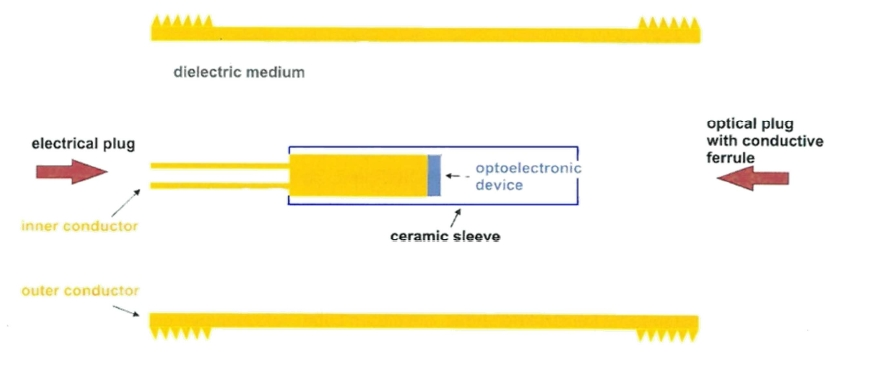

This memory cell serves as an optoelectronic device for data storage and can be used, among other things, for quantum cryptography and data transmission as used in the communications industry.

The presented memory cell is suitable for optoelectronic devices and for data storage. It can be used in the computer industry, optoelectronics or consumer electronics, for example.

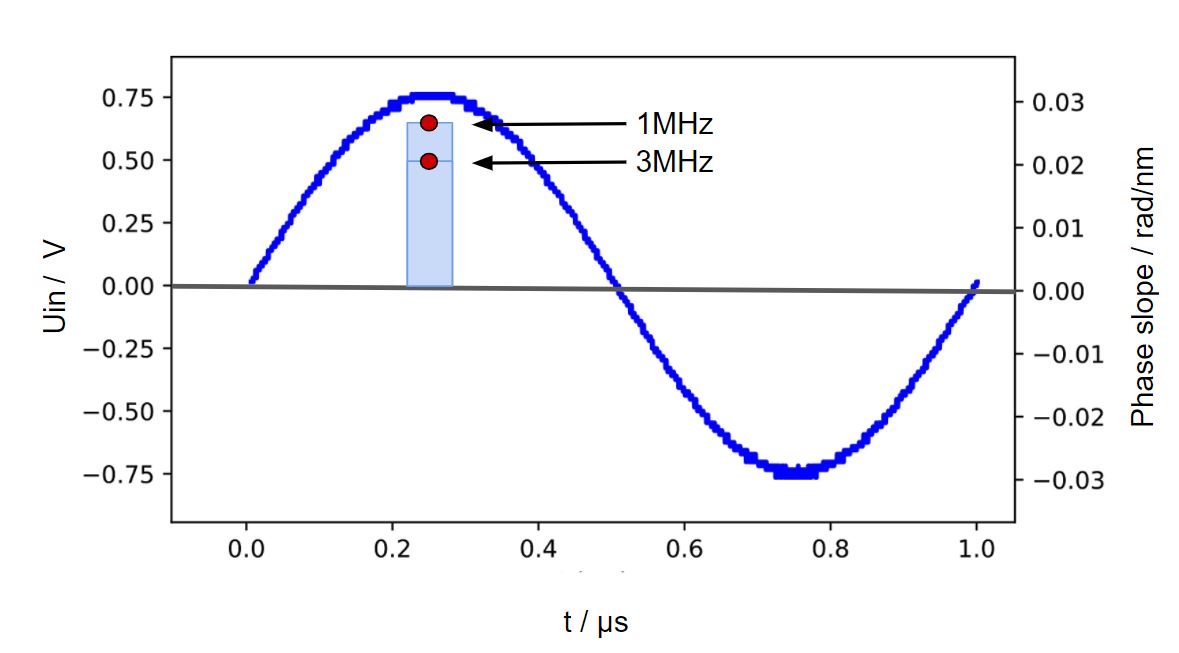

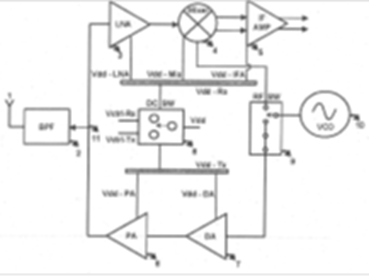

This invention can be used for various positioning systems, e.g. in museums or harbours, for distance measurement in vehicles or for any wireless data transmission. The novel radar system enables highly integrated circuits with improved isolation during switching and improved transmit and receive power.

In the case of tooth loss, this invention enables the production of readily available, long-term stable and physiological grafts. The tooth germ that later induces tooth growth is cultivated from human adult stem cells.

Note: This technology is not yet available to patients and will not be in the foreseeable future.

Unlike conventional drug-coated stents, which are used to maintain or restore the size of vessels in the human body, this novel microstructured polymer stent prevents late effects of stents such as increased risk of bleeding, acute intoxication or the formation of tumors.

This invention is used in the chemical industry and enables the oxidative coupling of methane and heterogeneous catalysis.

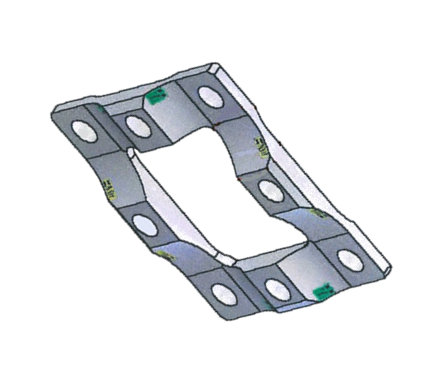

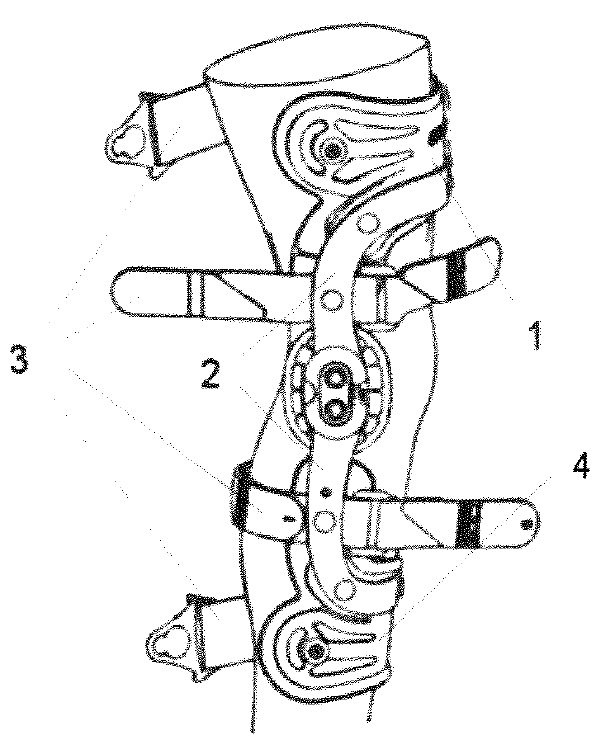

This new type of knee orthosis prevents the orthosis from shifting on the upper and lower leg segments during wear as a result of axial incongruence, as often happens with common knee orthoses. It also minimizes both constraining forces and strain on the soft tissue.

This invention, which is suitable for data storage and memory cells, enables long-term storage at fast write speeds.

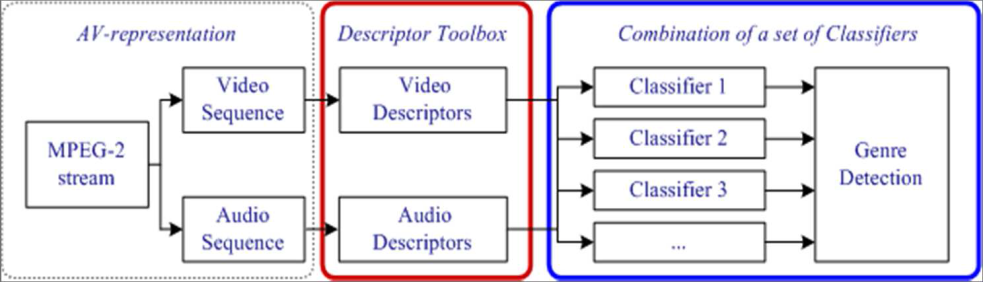

This technology offers the possibility to classify videos according to the respective genre (e.g. "Cartoon" and "Non-Cartoon"). This can be used both for private home use and for the automatic classification of audiovisual data for the Internet and search engines.

This invention offers a process for producing a photon pair source that generates entangled photon pairs in a simple and reproducible manner and is used, for example, in the security industry or communications and information technology.

This invention enables an improved process for purifying malt and is used, for example, in the beverage industry (especially the beer industry) to reduce process time and improve the oxidative stability of beverages.

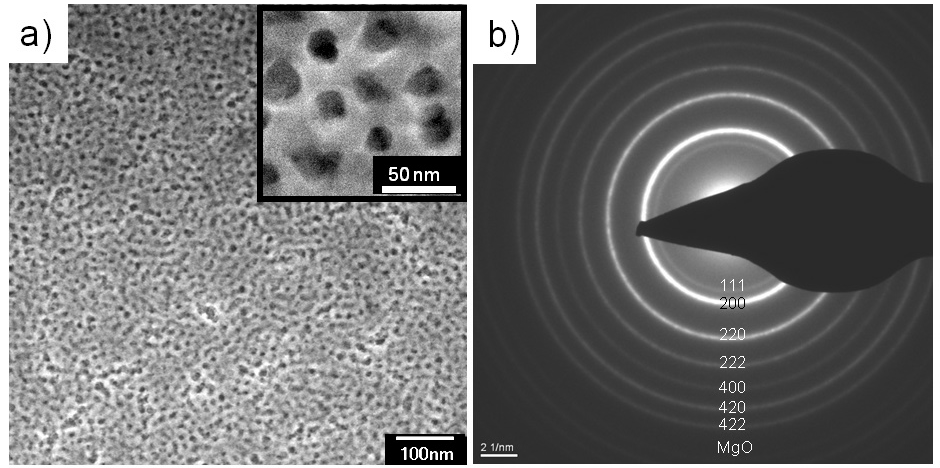

Metal oxides are required in many industrial processes. This invention provides a method for synthesizing mesoporous, nanocrystalline magnesium oxide and magnesium carbonate films using micelle templates.

The invention comprises a process for improving the quality of malt so that the iron content in malt and beverages, for example, can be reduced.

This invention offers a novel technology to increase the efficiency of cooling systems.

This new type of freight car can be used in freight transport, the railway industry or logistics and is more energy-efficient than conventional models, for example due to its aerodynamically advantageous design.

This new type of liquid distributor is used, for example, in absorption chillers and can replace systems that were previously more complex and more prone to faults over their operating life.

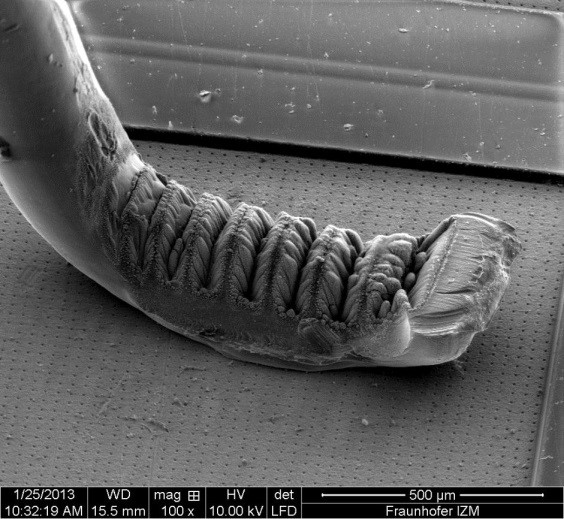

This technology can be used to produce a wide variety of electrical connections; wire bonding is used, for example, to connect a chip, such as a semiconductor, an LED or a sensor, to the electrical connections of its package.

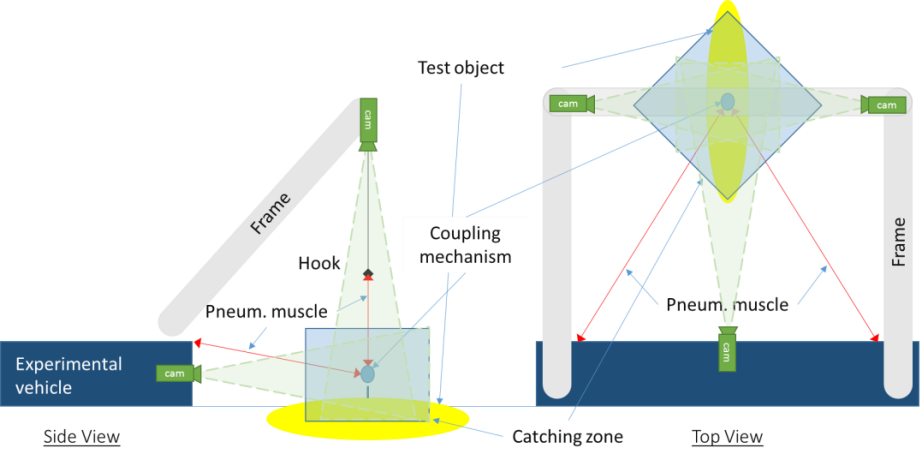

As an essential component of so-called virtual reality (VR), this invention provides a mobile haptic device with high dynamics, large displayable forces, a large workspace and excellent spatial resolution. It can be used, for example, in product development or for interaction between humans and 3D environments.

Bonding is used in particular to connect an electronic circuit to the board and thus to create contact points. This invention offers a non-destructive test procedure that determines simply and reliably whether a bond has been formed correctly.

This invention offers a generator that converts mechanical energy into electrical energy and is used, for example, in electronic door locking systems and energy conversion systems.

This invention provides a way to remove synthetic substances as well as bacteria and viruses from wastewater.

This invention provides a control method for wind turbines to adapt their overall performance to the prevailing operating conditions.

In the context of renewable energy use, this invention offers an approach to emission-free energy storage.

This invention is used to lift people, vehicles or other objects that have gone overboard out of the water.

This invention offers a novel universally applicable technique for image and video coding.

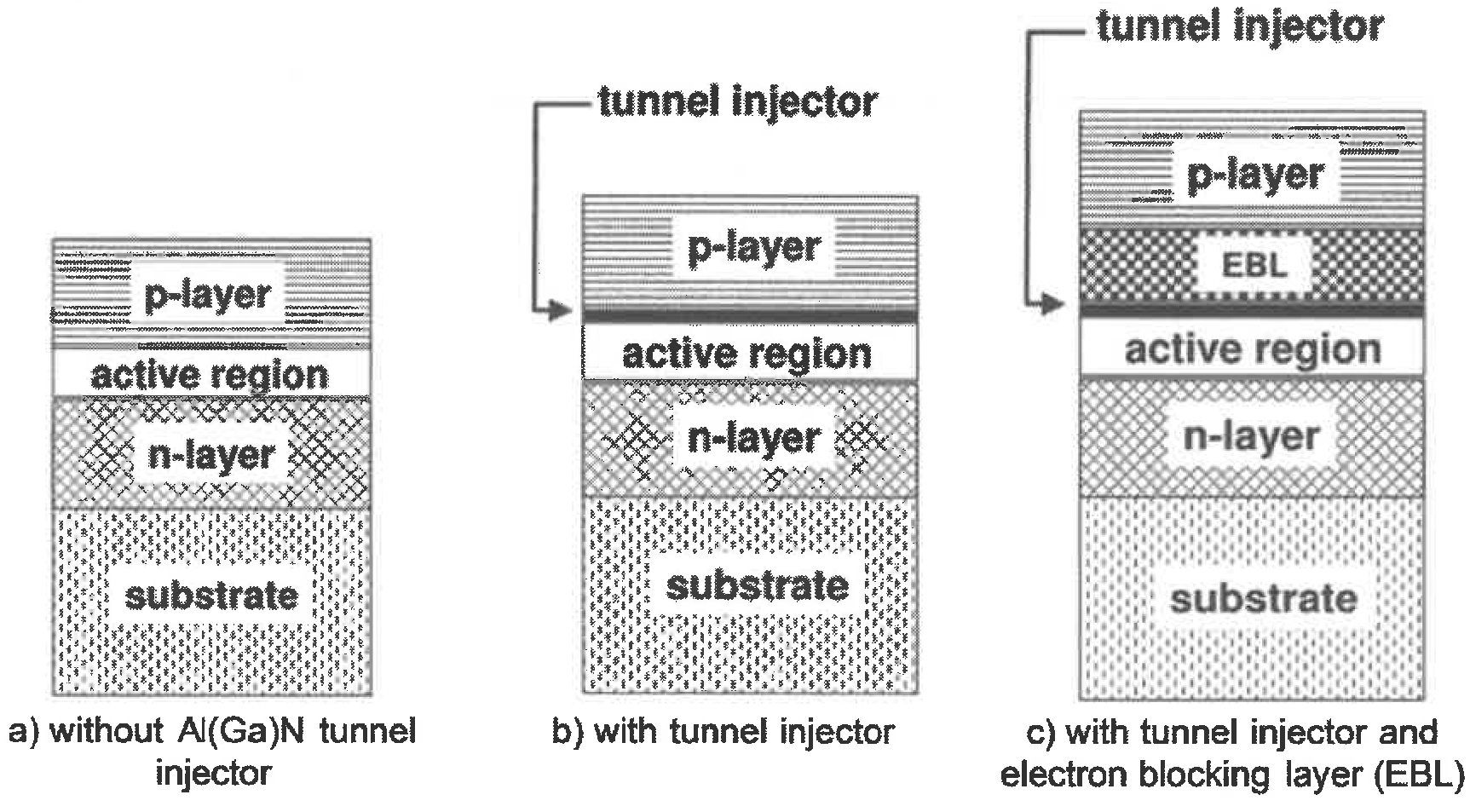

This invention comprises a so-called semiconductor layer sequence, which can be used in LEDs, for example.

This invention improves the performance of LEDs and can be used in medical devices or endoscopy, for example.

This novel optical sensor, which has a very high sensitivity, can be used in biomedicine, biochemistry, pharmacy or environmental monitoring.

This invention is used, for example, in telecommunications and enables fast but also secure transmission of optical signals.

This invention enables the production of albicidin, which could form the basis for novel, highly effective antibiotics against resistant bacteria.

This invention comprises the production of a novel probiotic, protein-rich, fermented sports drink based on barley malt wort.

This invention is used in the chemical industry and offers a method for the production of hydrosilanes without safety problems.

This bioadhesive can be used in medicine to treat bone fractures or in wound treatment.

This invention enables efficient processing of pixels to increase the quality of a video sequence. The procedure is interesting for video sharing websites, online video-on-demand platforms or streaming media providers.

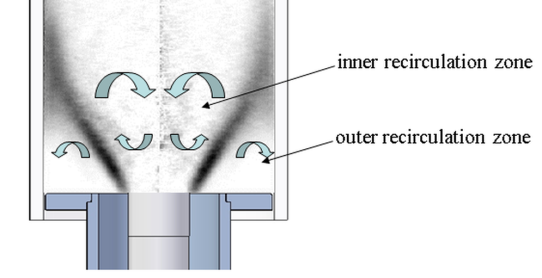

For the first time, this method offers active control of chaotic combustion instabilities that can occur undesirably in various combustion systems.

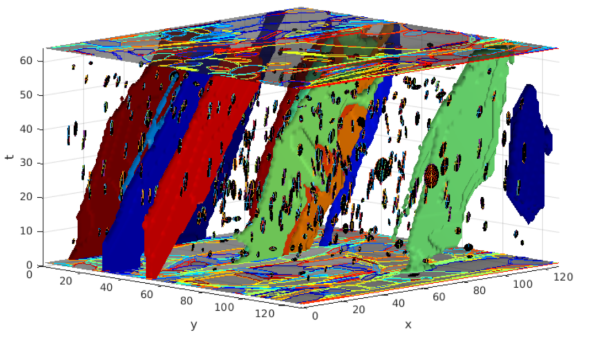

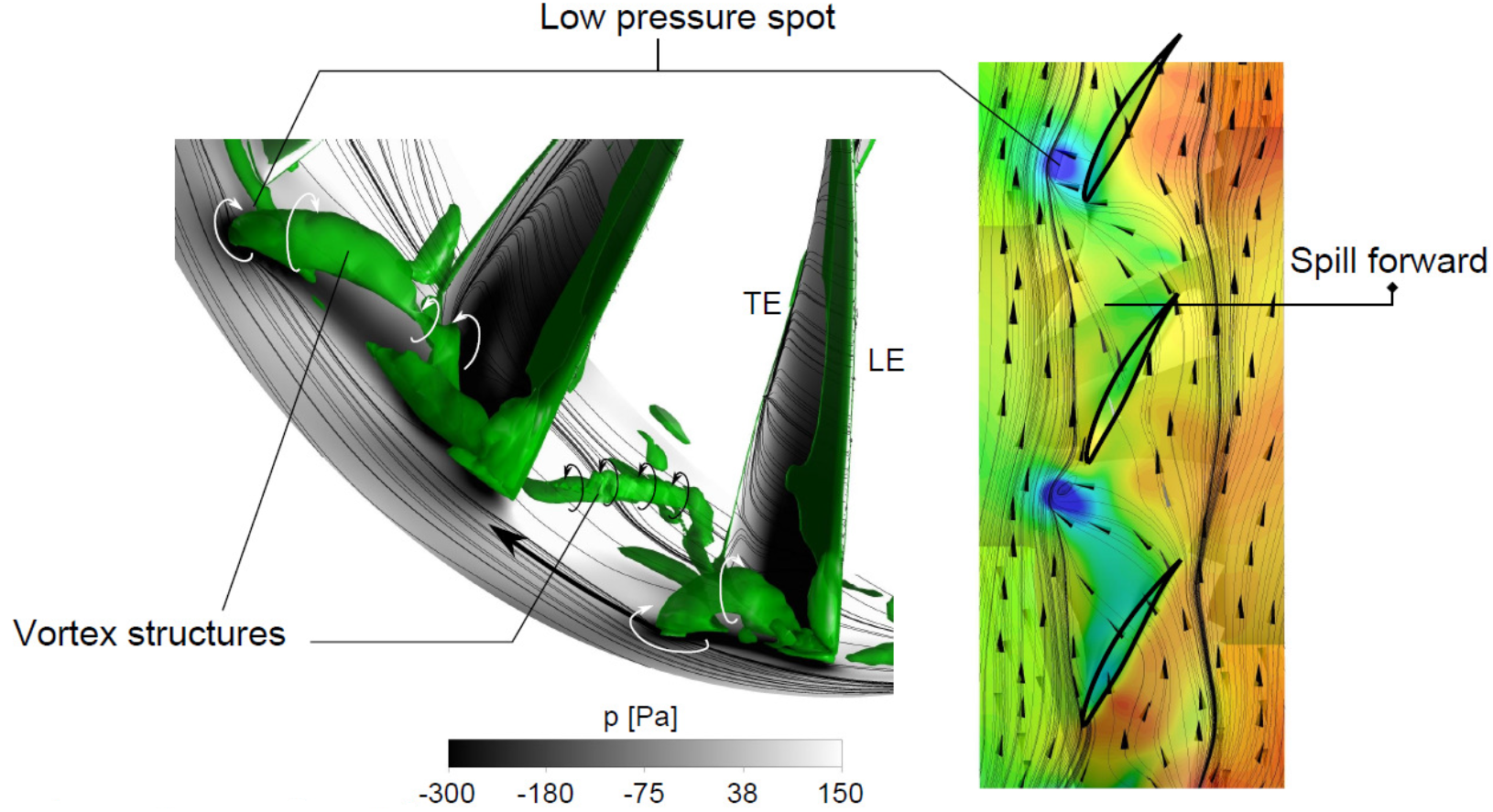

The invention enables the prediction of instability in a compressor, which allows an early warning in the event of a fault. The early warning system can be used in thermal turbomachinery, for example in aircraft engines.

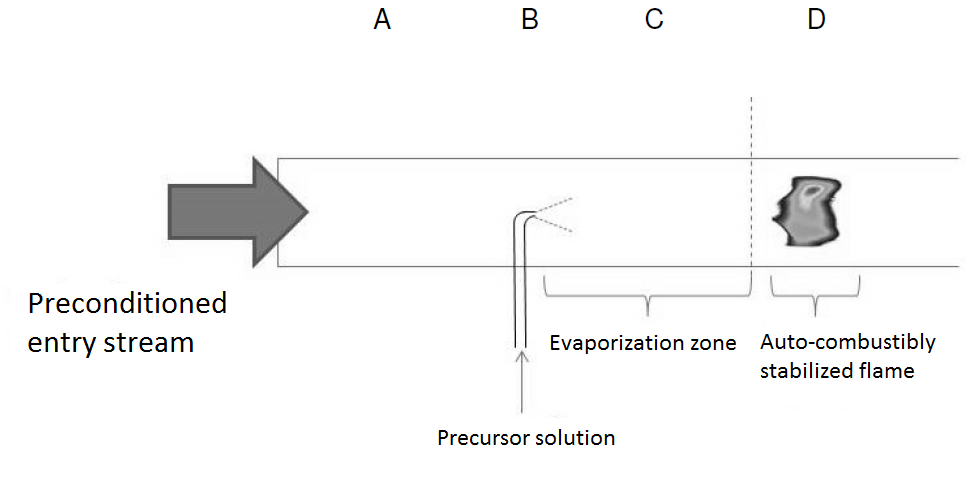

This process enables the generation of nanoparticles in spray flames which can be used, for example, as coating material, in electrical devices, for thermal conduction or insulation or as catalyst.

This innovative carbon-fiber loop can be used, for example, as part of the supporting structure of bridges, tunnels and towers or of mobile machines such as cranes, ships or wind turbines.

This invention comprises a novel coupling device for liquid and gaseous media that can be used, for example, in autonomous fuel transmission and refuelling of satellites.